If you receive this message when working with Lawson Security Administrator (LSA):

“The version of this file is not compatible with the version of Windows you’re running. Check your computer’s system information to see whether you need an x86 (32-bit) or x64 (64-bit) version of the program.”

Corrective action

The LSA tool should be able to run on both 32-bit and 64-bit systems. Take these steps to check whether the message is caused by a different operating system issue.

- Restart your operating system.

- Log in to the computer as an administrator, run the LSA installer as an administrator, and proceed with the LSA installation.

- If the error message occurs again, the LSA download file might be corrupted. Download the latest version of the security administration tool and repeat the previous step.

- If you continue to get the same error, right-click the LSA installation program > select Troubleshoot Compatibility > click Try Recommended Settings > click Start the Program > proceed with the LSA installation.

- If the installation is still not successful, contact Infor Support.
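One way to test the "corrupted download" theory before reinstalling is to download the installer a second time and compare the two files by hash. The Python sketch below is only an illustration, not an Infor-provided procedure, and the file names in the usage comment are hypothetical. A mismatch suggests at least one download is damaged; a match does not prove the file is good, only that both copies are identical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def same_contents(first: Path, second: Path) -> bool:
    """True when both files produce the same SHA-256 digest."""
    return sha256_of(first) == sha256_of(second)


# Usage (hypothetical file names, adjust to your own downloads):
#   same_contents(Path("lsa_setup_first.exe"), Path("lsa_setup_second.exe"))
```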

Modern enterprise resource planning (ERP) transformations promise efficiency, but many organizations are discovering hidden costs after go-live. In a recent ERP Today article, content director Radhika Ojha explains why legacy systems, often left running “just in case,” are quietly undermining those gains.

After migrating to platforms like SAP S/4HANA or the cloud, companies frequently keep old systems alive for audits or legal access. These “zombie systems” drive up costs, consume resources, and introduce security risks, especially when they’re no longer patched or actively managed. Instead of delivering savings, they create ongoing technical debt.

A smarter approach starts with separating operational data (needed daily) from historical data (kept for compliance). Moving decades of old data into modern systems can slow performance and inflate cloud costs. Tools like Kyano Outboard help by offloading historical data into flexible storage while keeping it accessible for reporting, which reduces strain on core systems.

Even more impactful is eliminating the need to keep entire legacy applications running. Solutions like Kyano Datafridge allow organizations to retire the application layer entirely while preserving data in secure, compliant, browser-based archives. This ensures auditors and users can still access records without maintaining outdated infrastructure.

The payoff is significant. Companies that actively decommission legacy systems can reduce total cost of ownership by up to 80%, while improving security and freeing IT teams to focus on innovation. Ojha’s key takeaway is that ERP transformation doesn’t end at go-live. A complete strategy includes a “sunset phase” for legacy systems, so that organizations preserve their data history without letting it hold back their future.

Enterprise data strategy is shifting from single platforms to interconnected ecosystems. In a recent Forbes article, Robert Kramer of Moor Insights & Strategy explains that modern enterprises can no longer rely on one centralized system, such as a traditional enterprise resource planning (ERP) platform, to manage today’s complex, AI-driven data environments.

Historically, enterprise data lived inside tightly integrated ERP systems built for accuracy, auditability and transactional stability. While reliable, these architectures were designed for retrospective reporting and struggled to meet the growing demand for real-time insights. As digital channels, mobile applications and sensor data expanded, organizations adopted distributed processing tools and cloud platforms to handle higher data volume, velocity and variety.

But this evolution also introduced new complexity. Multi-cloud environments, overlapping tools and fragmented data pipelines have created operational sprawl. As a result, raw performance is no longer the key differentiator among enterprise data platforms. Instead, the real value lies in the ecosystem surrounding them: how effectively systems integrate, share governance, maintain consistent definitions and enable secure collaboration across environments.

Today’s enterprise data platforms act as foundational layers within broader ecosystems that include analytics engines, streaming services, artificial intelligence (AI) tools and governance frameworks. Vendors may offer unified platforms or modular services, but success ultimately depends on how well these components work together.

The rise of AI makes this even more critical. As automated systems increasingly influence business decisions, strong governance, lineage tracking and data quality controls must be embedded across the entire ecosystem.

Ultimately, Kramer argues that IT leaders must shift their mindset from choosing a single platform to designing and managing a resilient data ecosystem that can scale, adapt and deliver trusted insights.

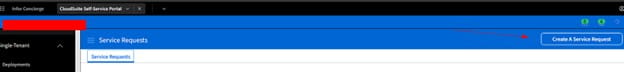

Follow these steps to update a user’s DB password via Infor Lawson CloudSuite:

- Log in to your Concierge account and go to the CloudSuite app (this assumes you have access; if you do not, open a ticket with Infor).

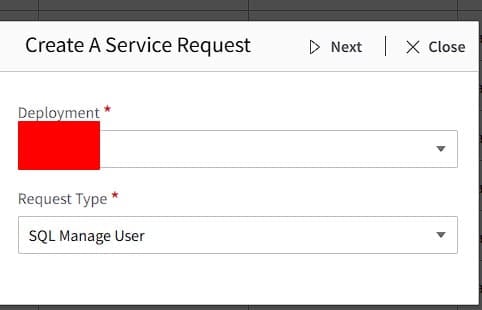

- Create a Service Request

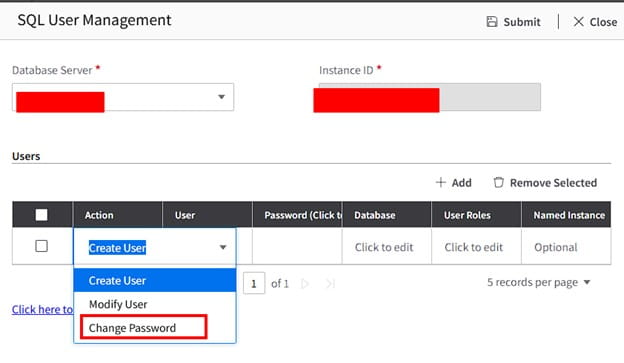

- Select Deployment and Request Type: SQL Manage User

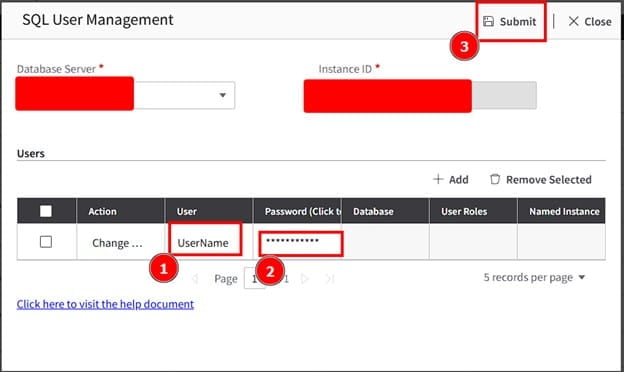

- Select Database Server and, in the drop-down, select Change Password

- Enter the username and the new password for the user, then click Submit

All done! Validate with the user. If this is something you need assistance managing, Nogalis offers a team of Lawson specialists within a single MSP services contract for customers. Let us know if we can assist you today.

Enterprise software is undergoing a major transformation as cloud computing reshapes how companies run their operations. An article published by Slashdot explains how the ERP (enterprise resource planning) industry evolved, led by its original pioneer, SAP.

For decades, ERP systems served as the backbone of corporate IT. Originating from manufacturing resource planning in the 1960s, ERP platforms eventually expanded to manage finance, HR, supply chains, and operations across entire organizations. But traditional ERP was notoriously complex and expensive. Deployments could take years and required dedicated hardware and specialized staff, and upgrades were risky due to heavy customization and tight connections with legacy systems.

The rise of cloud computing in the early 2000s began to challenge this model. Software-as-a-service allowed businesses to rent scalable applications instead of running massive on-premises systems. Real-time data sharing, e-commerce growth, and the emergence of AI demanded faster, more flexible software, something traditional ERP struggled to deliver. Early cloud ERP solutions, including those pioneered by NetSuite, helped smaller companies adopt more agile systems. But larger organizations still faced complexity when migrating from legacy platforms.

The real shift came when SAP re-engineered its flagship ERP for the cloud. Instead of an all-in-one monolithic system, SAP introduced a cloud-native architecture centered on a lightweight core that orchestrates operations while connecting to specialized applications, services, and third-party tools through APIs. This modular approach delivers several advantages: faster innovation, easier configuration instead of heavy customization, and scalability that works for both small businesses and global enterprises. In effect, ERP is evolving into something broader: a cloud platform that unifies data, applications, and AI across the enterprise.

The main takeaway is this: cloud computing didn’t just modernize ERP; it fundamentally redefined it.

When working with MySQL, you might notice something surprising: a query that runs quickly on its own suddenly becomes slow when you try to save the results into a temporary table.

For example, let’s say you run a SELECT query that completes in about two minutes. But when you try to capture those results with:

    CREATE TEMPORARY TABLE table_name
    SELECT field1, field2, field3
    FROM source_table;

the process hangs, forcing you to kill it after five minutes. What’s going on here?

Why It Happens

When you run CREATE TEMPORARY TABLE … SELECT, MySQL is actually doing two jobs:

- Running the SELECT – fetching rows from the source tables, applying filters, joins, and any transformations.

- Creating and populating the temporary table – defining a new schema on the fly and writing the rows into it.

That second step adds overhead. Here are the most common reasons for the slowdown:

- Data Volume: Copying millions of rows into a new table takes time, even if the query itself executes quickly.

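- Indexes: Your source query might benefit from indexes, but inserts into the temporary table don’t. MySQL still has to write every row into the new table.

- Storage Engine: Unless you specify an engine, the temporary table uses the server’s default temporary-table storage engine (InnoDB on current MySQL versions), so large result sets may end up being written to disk, which is much slower than working in memory.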

- Server Resources: CPU, memory, and I/O pressure can cause inserts into the temp table to crawl.

- Locking/Concurrency: Other queries on the same data can introduce waits and contention.

Creating a Temporary Table in Memory

If your dataset isn’t too large, you can speed things up by creating the temporary table in memory instead of on disk. MySQL supports this via the MEMORY storage engine:

    CREATE TEMPORARY TABLE temp_in_memory (
        id INT,
        name VARCHAR(255)
    ) ENGINE=MEMORY;

Then, you can insert data directly into this in-memory structure:

    INSERT INTO temp_in_memory
    SELECT field1, field2
    FROM source_table;

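Because the table lives in RAM, inserts and reads are much faster. The tradeoff is a size limit: a MEMORY table you create yourself cannot grow beyond max_heap_table_size, and an insert that would exceed it fails with a “table is full” error. (The tmp_table_size setting applies to the internal temporary tables MySQL creates on its own while executing queries.)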
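Before switching to ENGINE=MEMORY, it can help to estimate whether the result set will fit under the size cap. The Python sketch below is a back-of-the-envelope check under stated assumptions: MEMORY tables store rows at a fixed width, so a VARCHAR(255) column reserves its full declared width in every row; the 1,024 bytes per row in the usage note is an illustrative guess for the temp_in_memory table above, and the 80% headroom factor is an arbitrary safety margin. The function names are hypothetical, and index and engine overhead are ignored.

```python
# MySQL's documented default for max_heap_table_size is 16 MiB.
DEFAULT_MAX_HEAP_TABLE_SIZE = 16 * 1024 * 1024


def estimated_table_bytes(row_count: int, bytes_per_row: int) -> int:
    """Rough data size: row count times fixed row width (no index or engine overhead)."""
    return row_count * bytes_per_row


def fits_in_memory_table(
    row_count: int,
    bytes_per_row: int,
    max_heap_table_size: int = DEFAULT_MAX_HEAP_TABLE_SIZE,
    headroom: float = 0.8,
) -> bool:
    """True when the estimate stays within a fraction (headroom) of the size cap."""
    budget = max_heap_table_size * headroom
    return estimated_table_bytes(row_count, bytes_per_row) <= budget
```

For example, `fits_in_memory_table(10_000, 1024)` stays within the default cap, while `fits_in_memory_table(20_000, 1024)` does not. In practice you would read the real limit from your server (for instance with SHOW VARIABLES LIKE 'max_heap_table_size') and pass it in.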

Best Practices

If you’re seeing big slowdowns when creating temporary tables, try:

- Reviewing and optimizing your SELECT query with proper indexing.

- Using ENGINE=MEMORY if your temp dataset fits in RAM.

- Monitoring resource usage while the query runs (SHOW PROCESSLIST, or the Performance Schema tables).

- Splitting large inserts into smaller batches.

- Making sure the temp table schema only includes the columns and datatypes you really need.
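The "smaller batches" suggestion can be sketched as follows. This Python example only generates the SQL text for each batch; it assumes that field1 in source_table is an indexed integer key with a known minimum and maximum (an assumption, since the article does not say so), and it reuses the temp_in_memory table from the earlier example. The function names are hypothetical, and you would run each statement through whatever MySQL client you already use.

```python
from typing import Iterator, List, Tuple


def id_batches(min_id: int, max_id: int, batch_size: int) -> Iterator[Tuple[int, int]]:
    """Yield inclusive (start, end) key ranges that cover min_id..max_id."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    start = min_id
    while start <= max_id:
        end = min(start + batch_size - 1, max_id)
        yield start, end
        start = end + 1


def batch_insert_statements(min_id: int, max_id: int, batch_size: int) -> List[str]:
    """Build one INSERT ... SELECT per key range (values are integers only)."""
    return [
        "INSERT INTO temp_in_memory (id, name) "
        "SELECT field1, field2 FROM source_table "
        f"WHERE field1 BETWEEN {int(start)} AND {int(end)};"
        for start, end in id_batches(min_id, max_id, batch_size)
    ]
```

Splitting on a key range keeps each insert short, which makes progress easier to watch and lets you stop or retry a single batch without restarting the whole copy.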

Takeaway

A SELECT query that runs fast doesn’t always translate to a fast CREATE TEMPORARY TABLE. The extra overhead of creating and writing the table can introduce new bottlenecks. If you only need the results for lightweight, session-specific work, an in-memory temporary table (ENGINE=MEMORY) can be a game-changer.

By understanding how MySQL handles temporary tables under the hood, you can choose the right approach and keep your queries moving fast.

Key Questions Every Organization Should Ask Before Choosing a Lawson Data Archive Platform

Retiring a legacy Lawson system can significantly reduce infrastructure costs and eliminate the burden of maintaining aging ERP servers. However, once Lawson is retired, the archive platform becomes the long-term system of record for historical financial and operational data.

Before selecting a solution, organizations should verify the following areas.

- Security & Compliance

☐ Does the platform support encryption at rest and in transit?

☐ Does it integrate with your existing identity provider (SSO/SAML)?

☐ Are role-based permissions available to control user access?

☐ Does the system maintain detailed audit logs of all user activity?

☐ Has the vendor undergone SOC 2 or equivalent security assessments?

☐ Can administrators manage user access policies centrally?

- Data Volume & Performance

☐ Has the solution been tested with full production-scale Lawson datasets?

☐ Can it handle 10+ years of transactional history without performance issues?

☐ Are query results returned quickly even with billions of records?

☐ Is the architecture designed to scale as data volumes grow over time?

- Query Coverage & Data Accessibility

☐ Does the platform support detailed queries across major Lawson modules?

Examples include:

☐ General Ledger (GL)

☐ Accounts Payable (AP)

☐ Vendor Records

☐ Payroll History

☐ Procurement Data

☐ Transaction Distributions

☐ Can users access transaction-level detail, not just summary reports?

☐ Are query screens designed to mirror familiar Lawson workflows?

- Long-Term Platform Sustainability

☐ Does the architecture eliminate the need to maintain legacy Lawson servers?

☐ Is the platform built on modern cloud infrastructure?

☐ Does the vendor have a clear long-term roadmap for the product?

☐ Will the platform remain viable for 10–20 years of data retention?

- Governance & Audit Readiness

☐ Can auditors retrieve historical transactions quickly and easily?

☐ Are access logs and user activity recorded for compliance reviews?

☐ Can organizations demonstrate clear data stewardship and governance?

☐ Does the platform support internal and external audit processes?

Final Consideration

Retiring Lawson is often a once-in-a-decade decision. The archive platform selected will become the system your organization relies on to access historical financial and operational data for years to come.

Ensuring the platform meets the requirements above helps protect your organization from costly surprises after the legacy ERP has been retired.

Save this checklist and use it as a guide before choosing a Lawson archive platform.

Problem:

User does not have access to LBI. See screenshot below.

Resolution:

First, go to Mingle/InforOS, open Manage Users, and search for the user.

Next, click on the arrow shown below which will take you to the user profile.

Click on the Roles tab:

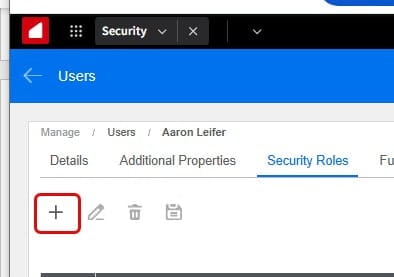

Then click on the + to add roles:

Next, search for the Mingle role for LBI and click + Add & Close:

Finally, click the Save icon. The user should now have access to LBI.

Across state and local governments, Lawson environments that have supported financial, HR, and procurement operations for decades are approaching retirement. Maintaining aging ERP infrastructure simply to access historical data is costly and increasingly difficult to justify.

Archiving Lawson data allows agencies to retire the legacy application while preserving access to historical records. However, not all archive platforms are designed to support the transparency, auditability, and long-term retention requirements of the public sector.

Before selecting a Lawson archive solution, government organizations should verify the following five areas.

- Security and Access Governance

Public sector systems often contain sensitive financial, employee, and vendor information. Even when data is historical, agencies must maintain strict controls over who can access it.

A Lawson archive platform should support:

- Role-based access control

- Integration with government identity providers (SSO/SAML)

- Encryption at rest and in transit

- Detailed audit logs of user access

- Clear separation of duties for administrators and users

These controls ensure agencies can maintain proper governance over historical data while meeting security requirements.

- Proven Performance with Large Historical Data Sets

Government Lawson systems often contain decades of transactional history. Financial systems may include millions—or even billions—of records across modules such as:

- General Ledger

- Accounts Payable

- Procurement

- Payroll

- Vendor records

- Distribution transactions

Archive platforms should be tested with full production-scale datasets, not just sample data. Agencies should confirm that queries remain responsive even when accessing large volumes of historical transactions.

- Transparency and Accessibility of Public Records

Public sector organizations have unique transparency requirements. Historical financial data may need to be retrieved for:

- Public records requests

- Legislative inquiries

- Internal investigations

- Financial reporting

An archive platform should allow authorized users to quickly locate detailed transaction records without requiring technical expertise.

The system should provide intuitive query capabilities and data views that mirror the structure users were familiar with in Lawson.

- Long-Term Sustainability of the Platform

Government data retention requirements can extend for many years. In some cases, financial and personnel records must remain accessible for decades.

An archive platform should be built on modern infrastructure that ensures long-term accessibility without requiring agencies to maintain outdated hardware or legacy software environments.

Organizations should verify that the platform architecture supports long-term sustainability and can evolve alongside modern cloud technologies.

- Audit Readiness and Financial Oversight

Public sector organizations operate under significant oversight from auditors, regulators, and governing bodies. Historical financial records must remain readily available to support audits and reviews.

A Lawson archive platform should provide:

- Comprehensive audit trails

- The ability to retrieve detailed transaction records

- Clear tracking of who accessed data and when

- Reliable support for financial and compliance audits

Maintaining this level of audit readiness is essential to ensuring continued accountability and transparency.

Final Thoughts

Retiring a legacy Lawson system can significantly reduce operational complexity and infrastructure costs for government organizations. However, the archive platform chosen becomes the long-term repository for historical financial and operational records.

Ensuring the platform supports strong security, scalability, transparency, sustainability, and audit readiness helps protect both the organization and the public interest.

Careful evaluation at the outset can prevent challenges years down the road.