When migrating schemas to MySQL using the AWS Schema Conversion Tool (SCT), you may encounter the error:

“602: Converted tables might exceed the row-size limit in MySQL.”

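This warning means that one or more tables being converted could exceed MySQL’s row-size limit. MySQL caps the combined declared size of a table’s columns at 65,535 bytes for every storage engine. InnoDB (the default in MySQL and Amazon Aurora MySQL) adds its own limit on data stored in-row: slightly less than half a page, or about 8KB with the default 16KB page. If your converted table design goes beyond either limit, MySQL can reject the CREATE TABLE statement.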

Why This Happens

- Large VARCHAR or TEXT columns

- Too many columns in a single table

- Wide columns that, when combined, exceed the 64KB limit

- Non-optimized data types during schema conversion
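To see how quickly wide columns add up, here is a minimal Python sketch that estimates how many bytes a table definition counts toward the 65,535-byte limit. It is an approximation under stated assumptions: it ignores NULL flags and engine overhead, assumes utf8mb4 (4 bytes per character) unless told otherwise, and the table layouts are hypothetical.

```python
# Rough estimate of how many bytes a table definition counts toward
# MySQL's 65,535-byte row-size limit. Simplified: ignores NULL flags
# and engine-specific overhead.

MYSQL_ROW_LIMIT = 65_535

# Storage size in bytes for a few fixed-width integer types.
FIXED_WIDTH = {"TINYINT": 1, "SMALLINT": 2, "INT": 4, "BIGINT": 8}

# TEXT and BLOB values are stored separately; MySQL documents their
# contribution to the row-size limit as 9 to 12 bytes. Use the upper bound.
TEXT_OR_BLOB_BYTES = 12


def varchar_bytes(length, bytes_per_char=4):
    """Worst-case bytes for VARCHAR(length), including the length prefix."""
    data = length * bytes_per_char
    length_prefix = 1 if data <= 255 else 2
    return data + length_prefix


def estimate_row_bytes(columns, bytes_per_char=4):
    """columns is a list of (type_name, length); length is used for VARCHAR only."""
    total = 0
    for type_name, length in columns:
        kind = type_name.upper()
        if kind == "VARCHAR":
            total += varchar_bytes(length, bytes_per_char)
        elif kind in ("TEXT", "BLOB"):
            total += TEXT_OR_BLOB_BYTES
        else:
            total += FIXED_WIDTH[kind]
    return total


# Hypothetical converted table: an INT key plus four VARCHAR(5000) columns.
wide_table = [("INT", None)] + [("VARCHAR", 5000)] * 4
# The same table with two of the long columns converted to TEXT.
slimmer_table = [("INT", None)] + [("VARCHAR", 5000)] * 2 + [("TEXT", None)] * 2

for name, table in (("wide", wide_table), ("slimmer", slimmer_table)):
    size = estimate_row_bytes(table)
    print(name, size, "over limit" if size > MYSQL_ROW_LIMIT else "fits")
```

Running an estimate like this against the largest tables in the SCT assessment report is a quick way to decide which columns to shrink or move first.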

Fixing the Issue

- Review and Optimize Column Data Types

- Reduce overly large VARCHAR lengths. For example:

- VARCHAR(1000) → VARCHAR(255) if values never exceed that length.

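- Replace TEXT with TINYTEXT where values stay under 255 bytes. (MEDIUMTEXT is larger than TEXT, not smaller.) TEXT and BLOB columns count only 9 to 12 bytes toward the 65,535-byte limit, so converting a very long VARCHAR to TEXT often helps more.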

- Use integers (INT, SMALLINT, TINYINT) instead of BIGINT if values fit.

- Normalize the Table

If your schema has too many wide columns:

- Split them into multiple related tables

- Use foreign keys to maintain relationships

- Store large blobs of data separately

- Drop Unnecessary Columns

Remove unused or redundant columns before migration to shrink the row size.

- Consider Storage Engine Options

- InnoDB enforces the row-size limit but can store large TEXT/BLOB values off-page. With the default DYNAMIC row format, only a 20-byte pointer remains in the row.

- If wide text columns are used only for full-text lookups, moving them into a separate reference table keeps the main table’s rows small.

- Change InnoDB Page Size (Advanced)

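By default, InnoDB uses a 16KB page size. The innodb_page_size option accepts 4KB, 8KB, 16KB, 32KB, and 64KB. A larger page raises InnoDB’s in-row limit (to roughly 16KB at most), but it does not raise MySQL’s overall 65,535-byte limit.

⚠️ Important: This cannot be changed dynamically. The page size is fixed when the MySQL data directory is initialized, so changing it means building a new instance. (Only very old releases, before MySQL 5.6, required editing the UNIV_PAGE_SIZE definition and recompiling from source.)

- Export your schema and data

- Set innodb_page_size in the MySQL configuration file

- Initialize a new data directory and start MySQL on it

- Re-import your schema and data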

This approach is rarely used in practice because it complicates upgrades and maintenance, and is not supported on managed services like Amazon RDS or Aurora MySQL.

Best Practice in AWS Context

Since you’re working with AWS SCT and likely using Aurora MySQL or RDS, you cannot change InnoDB page size. Instead, the best fix is to:

- Normalize your schema

- Adjust column definitions

- Split oversized tables
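Splitting an oversized table, as recommended above, can be sketched as follows. This example uses Python’s built-in sqlite3 module only so that it runs anywhere. It is not MySQL, and the table and column names (customer, customer_notes, long_notes) are hypothetical. The pattern carries over: keep the narrow, frequently used columns in the main table and move the wide ones to a child table that shares the primary key.

```python
import sqlite3

# In-memory SQLite database, used here purely for illustration.
conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite needs this to enforce FKs

conn.executescript("""
-- Narrow main table: only the columns most queries need.
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,
    name        VARCHAR(100) NOT NULL
);

-- Wide column moved to a 1:1 child table keyed by the same id.
CREATE TABLE customer_notes (
    customer_id INTEGER PRIMARY KEY REFERENCES customer(customer_id),
    long_notes  TEXT
);
""")

conn.execute("INSERT INTO customer VALUES (1, 'Acme')")
conn.execute(
    "INSERT INTO customer_notes VALUES (1, 'A very long free-text note')"
)

# Join the two halves back together only when the wide column is needed.
row = conn.execute("""
    SELECT c.name, n.long_notes
    FROM customer AS c
    LEFT JOIN customer_notes AS n ON n.customer_id = c.customer_id
    WHERE c.customer_id = 1
""").fetchone()
print(row)
```

The foreign key keeps the two tables consistent, and queries that never touch the wide column no longer pay for it.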

Takeaway

The 602 error in AWS SCT isn’t a hard failure. It’s a warning that your schema design may hit MySQL’s row-size limits. While increasing the InnoDB page size is technically possible on self-managed MySQL servers, on AWS-managed databases the right solution is schema redesign and column optimization.

✅ Rule of thumb: If you’re migrating from Oracle, SQL Server, or DB2 into MySQL, review the largest tables carefully. Wide schemas that were fine in those engines may not fit in MySQL’s stricter row limits.

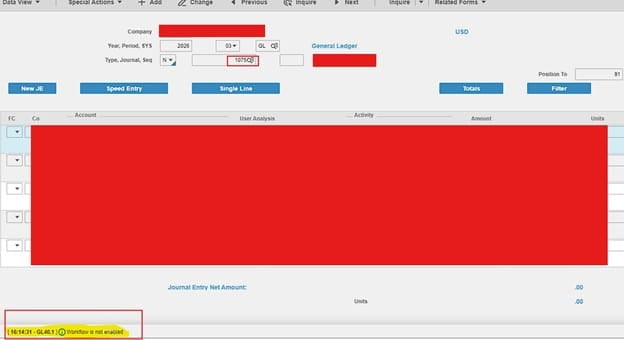

Problem:

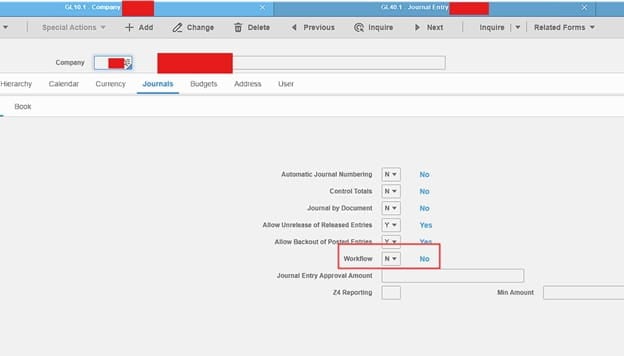

You’re trying to release a Journal Entry on GL40.1 but getting a “Workflow is not enabled” error (see the example screenshot).

Solution:

This is a simple fix. First, on GL10.1, inquire on the company and, under the Journals tab, set Workflow to No, then click Change.

Go back to GL40.1 and release the journal entry. The error should no longer appear. You’re done!

NOTE: This issue may happen when your GL10.1 form is a custom form and has customizations for the Workflow field.

If you receive this message when working with Lawson Security Administrator:

“The version of this file is not compatible with the version of Windows you’re running. Check your computer’s system information to see whether you need an x86 (32-bit) or x64 (64-bit) version of the program.”

Corrective action

The LSA tool should be able to run on both 32-bit and 64-bit systems. Take these steps to check whether this message is caused by a different operating system issue.

- Restart your operating system.

- Log in to the computer as an Administrator > Run the LSA installer as an Administrator > Proceed with the LSA installation.

- If the error message occurs again, the LSA download file might be corrupted. Download the latest version of the security administration tool and repeat the previous step.

- If you continue to get the same error, right-click the LSA installation program > Select Troubleshoot Compatibility > Click Try Recommended Setting > Click Start the Program > Proceed with the LSA installation.

- If the installation is still not successful, contact Infor Support.

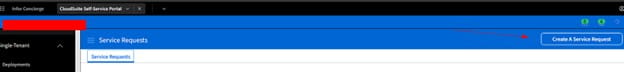

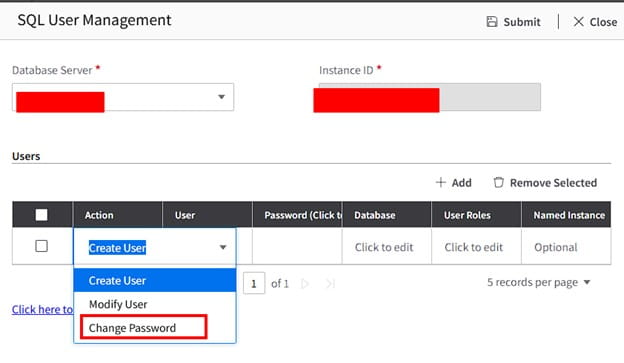

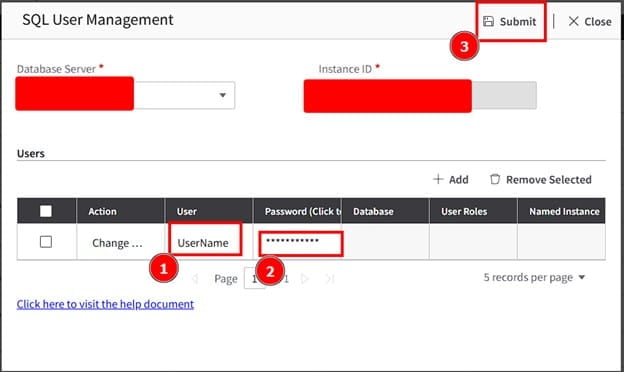

Follow these steps to update a user’s DB password via Infor Lawson Cloudsuite:

- Log in to your Concierge account and go to the Cloudsuite app (this assumes you have access; if not, open a ticket with Infor).

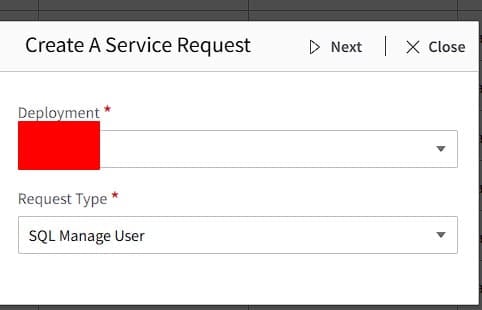

- Create a Service Request

- Select Deployment and Request Type: SQL Manage User

- Select Database Server and, in the drop-down, select Change Password

- Enter username, the new password for the user, then click Submit

All done! Validate with the user. If you need assistance managing this, Nogalis offers a team of Lawson specialists within a single MSP services contract for customers. Let us know if we can assist you today.

When working with MySQL, you might notice something surprising: a query that runs quickly on its own suddenly becomes slow when you try to save the results into a temporary table.

For example, let’s say you run a SELECT query that completes in about two minutes. But when you try to capture those results with:

CREATE TEMPORARY TABLE table_name
SELECT field1, field2, field3
FROM source_table;

the process hangs, forcing you to kill it after five minutes. What’s going on here?

Why It Happens

When you run CREATE TEMPORARY TABLE … SELECT, MySQL is actually doing two jobs:

- Running the SELECT – fetching rows from the source tables, applying filters, joins, and any transformations.

- Creating and populating the temporary table – defining a new schema on the fly and writing the rows into it.

That second step adds overhead. Here are the most common reasons for the slowdown:

- Data Volume: Copying millions of rows into a new table takes time, even if the query itself executes quickly.

- Indexes: Your source query might benefit from indexes, but inserts into the temporary table don’t—MySQL has to write all rows one by one.

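- Storage Engine: By default, a table created with CREATE TEMPORARY TABLE uses the disk-backed InnoDB engine (see default_tmp_storage_engine), so a large result set means real write I/O, which is much slower than working in memory.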

- Server Resources: CPU, memory, and I/O pressure can cause inserts into the temp table to crawl.

- Locking/Concurrency: Other queries on the same data can introduce waits and contention.

Creating a Temporary Table in Memory

If your dataset isn’t too large, you can speed things up by creating the temporary table in memory instead of on disk. MySQL supports this via the MEMORY storage engine:

CREATE TEMPORARY TABLE temp_in_memory (
    id INT,
    name VARCHAR(255)
) ENGINE=MEMORY;

Then, you can insert data directly into this in-memory structure:

INSERT INTO temp_in_memory
SELECT field1, field2
FROM source_table;

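Because the table lives in RAM, inserts and reads are much faster. The tradeoff is size: a MEMORY table cannot grow beyond max_heap_table_size (tmp_table_size applies only to the internal temporary tables MySQL creates on its own), and MEMORY tables cannot contain TEXT or BLOB columns.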
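Before switching to ENGINE=MEMORY, it helps to estimate whether the data will fit. The Python sketch below is a rough calculation, not a MySQL API. It assumes the default max_heap_table_size of 16MB, treats each VARCHAR as stored at its full declared width (MEMORY tables use fixed-length rows), and ignores index and per-row overhead, so the real capacity is lower.

```python
# Rough capacity estimate for a MEMORY table. Ignores index and per-row
# overhead, so treat the result as an upper bound.

DEFAULT_MAX_HEAP_TABLE_SIZE = 16 * 1024 * 1024  # MySQL default: 16MB


def memory_row_bytes(int_columns, varchar_lengths, bytes_per_char):
    """Fixed row width: 4 bytes per INT plus full-width VARCHARs."""
    total = 4 * int_columns
    for length in varchar_lengths:
        data = length * bytes_per_char
        total += data + (1 if data <= 255 else 2)  # length prefix
    return total


def max_rows(row_bytes, max_heap_table_size=DEFAULT_MAX_HEAP_TABLE_SIZE):
    """Largest row count whose raw data fits under the size cap."""
    return max_heap_table_size // row_bytes


# The temp_in_memory table above: id INT, name VARCHAR(255).
latin1_row = memory_row_bytes(1, [255], bytes_per_char=1)
utf8mb4_row = memory_row_bytes(1, [255], bytes_per_char=4)

print("latin1:", latin1_row, "bytes/row, up to", max_rows(latin1_row), "rows")
print("utf8mb4:", utf8mb4_row, "bytes/row, up to", max_rows(utf8mb4_row), "rows")
```

If the expected row count is well above the estimate, either raise max_heap_table_size for the session or keep the temporary table on the default engine.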

Best Practices

If you’re seeing big slowdowns when creating temporary tables, try:

- Reviewing and optimizing your SELECT query with proper indexing.

- Using ENGINE=MEMORY if your temp dataset fits in RAM.

- Monitoring resource usage during the query (SHOW PROCESSLIST, or performance schema tables).

- Splitting large inserts into smaller batches.

- Making sure the temp table schema only includes the columns and datatypes you really need.
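The batching suggestion above can be sketched as a small Python helper that splits a key range into chunks and builds one INSERT … SELECT per chunk. It assumes field1 is an indexed numeric key on source_table, which the original example does not state, and it only generates SQL text. Executing the statements with your MySQL client of choice is left out.

```python
def batch_ranges(min_id, max_id, batch_size):
    """Yield inclusive (start, end) key ranges covering min_id..max_id."""
    start = min_id
    while start <= max_id:
        end = min(start + batch_size - 1, max_id)
        yield start, end
        start = end + 1


def batched_insert_statements(min_id, max_id, batch_size):
    """Build one INSERT ... SELECT statement per key range.

    Assumption: field1 is an indexed numeric key on source_table.
    """
    return [
        "INSERT INTO temp_in_memory "
        "SELECT field1, field2 FROM source_table "
        f"WHERE field1 BETWEEN {start} AND {end};"
        for start, end in batch_ranges(min_id, max_id, batch_size)
    ]


# Example: keys 1..10 copied in batches of 4.
statements = batched_insert_statements(1, 10, 4)
for statement in statements:
    print(statement)
```

Smaller batches keep each write short, which makes progress visible and limits how long any single statement holds resources.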

Takeaway

A SELECT query that runs fast doesn’t always translate to a fast CREATE TEMPORARY TABLE. The extra overhead of creating and writing the table can introduce new bottlenecks. If you only need the results for lightweight, session-specific work, an in-memory temporary table (ENGINE=MEMORY) can be a game-changer.

By understanding how MySQL handles temporary tables under the hood, you can choose the right approach and keep your queries moving fast.

Problem:

User does not have access to LBI. See screenshot below.

Resolution:

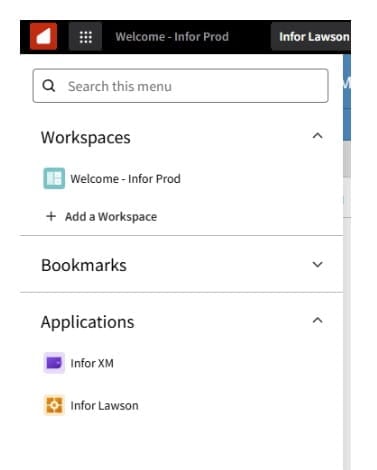

First, go to Mingle/InforOS, then go to manage users, and then search for the user.

Next, click on the arrow shown below which will take you to the user profile.

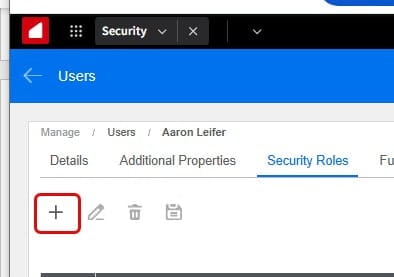

Click on the Roles tab:

Then click on the + to add roles:

Next, search for Mingle Role for LBI and click on the +Add & Close:

Finally, click the Save icon, and you should be good!

Lawson File Channels can be useful when importing files from a defined local Landmark directory or a remote one via FTP, SFTP, etc. They are especially useful when a file from an outside organization is delayed. Unlike an IPA scheduler, which runs only on a set schedule, a file channel will pick up a file that has been delayed for reasons outside of your organization’s control.

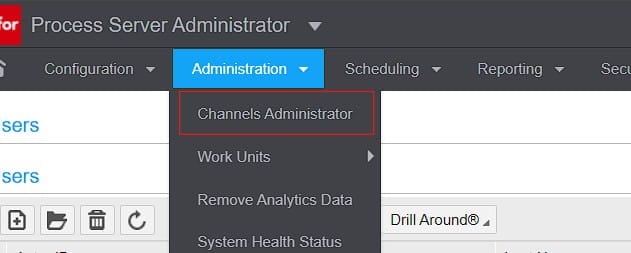

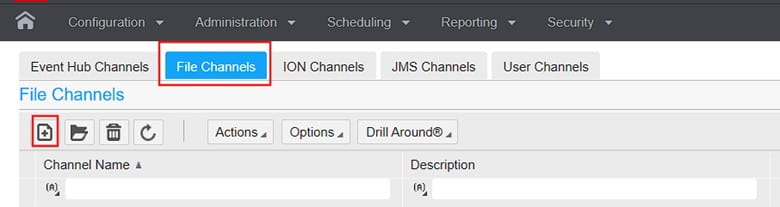

First, in Process Server Administrator, go to Administrator >> Channels Administrator (assuming you have permissions).

Then create a new File Channel:

Now that you know how to create one, here is how it works: a File Channel essentially acts as a source directory path that is scanned every X minutes for a file specified by a File Channel Receiver.

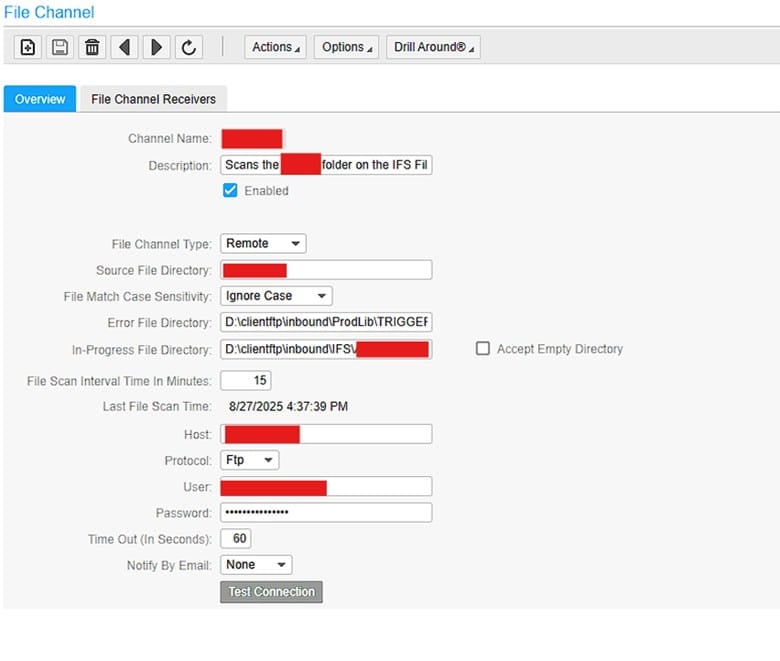

Below is an example of a File Channel searching a remote server via FTP:

Since the above is an FTP directory, it has a default directory upon connection. You then define the Source File Directory relative to that. If the FTP server connects and starts in the ..\inbound directory, and your source directory is ..\inbound\APFinance, then the Source File Directory is simply APFinance.

The Error File Directory and In-Progress File Directory are always directories on the local Landmark server, so make sure you create those directories first.

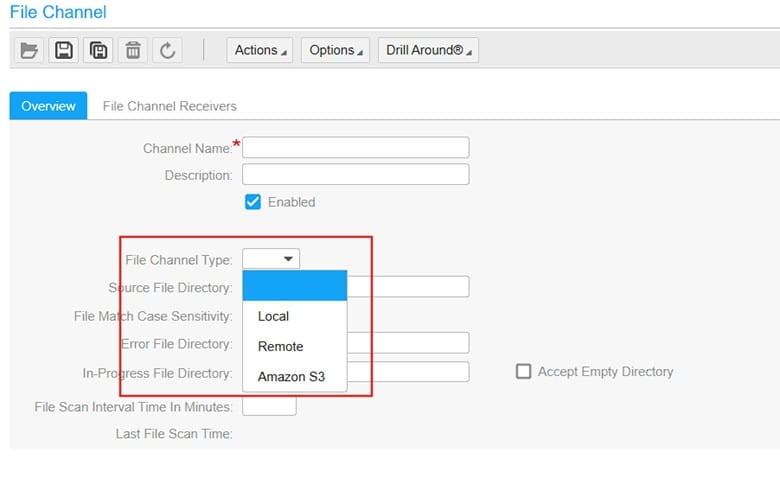

Additionally, you can change the File Channel Type. Local refers to the Landmark server, and Amazon S3 refers to a specified Amazon S3 location:

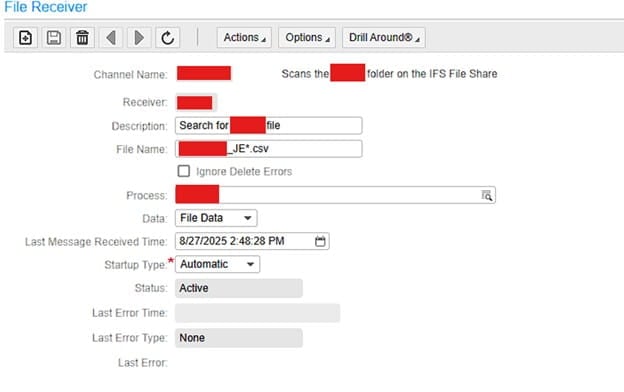

All other Parameters are self-explanatory so let’s move on to File Channel Receivers.

You can have multiple File Channel Receivers defining different file names for every File Channel. This way if you have one Source Directory, you can search different file names like ACH_*.txt, APC_*.xml etc.
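To illustrate how several receivers can share one source directory, here is a small Python sketch that routes file names to processes by wildcard pattern. It is a conceptual model only, not Landmark’s actual matching logic. The process names are hypothetical, and case-sensitive matching is an assumption.

```python
from fnmatch import fnmatchcase

# Hypothetical receivers on one File Channel: pattern -> IPA process name.
RECEIVERS = {
    "ACH_*.txt": "ProcessACH",
    "APC_*.xml": "ProcessAPC",
}


def matching_process(file_name, receivers=RECEIVERS):
    """Return the process for the first pattern that matches, else None."""
    for pattern, process in receivers.items():
        if fnmatchcase(file_name, pattern):
            return process
    return None


for name in ("ACH_20240101.txt", "APC_batch7.xml", "GL_summary.csv"):
    print(name, "->", matching_process(name))
```

A file that matches no receiver pattern is simply left alone, which is why distinct prefixes per feed make a shared directory workable.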

The “Process” field is the IPA process that will be processing the file.

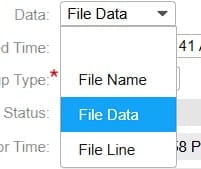

The Data field is also important. File Data is the ideal selection.

File Name: Triggers one workunit with just the file name.

File Data: Triggers one workunit with the file’s entire contents.

File Line: Triggers a separate workunit for each line of the file.
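The difference between the three Data settings can be modeled in a few lines of Python. This is a conceptual sketch of what each work unit would receive, based only on the descriptions above. The function name and the sample file are hypothetical.

```python
def work_unit_payloads(file_name, file_text, data_mode):
    """Return one payload per work unit the given Data setting would trigger."""
    if data_mode == "File Name":
        return [file_name]              # one work unit, name only
    if data_mode == "File Data":
        return [file_text]              # one work unit, whole file
    if data_mode == "File Line":
        return file_text.splitlines()   # one work unit per line
    raise ValueError(f"Unknown Data setting: {data_mode}")


sample_name = "ACH_1.txt"
sample_text = "line one\nline two\nline three"

for mode in ("File Name", "File Data", "File Line"):
    payloads = work_unit_payloads(sample_name, sample_text, mode)
    print(mode, "->", len(payloads), "work unit(s)")
```

File Line multiplies work units with file size, which is one reason File Data is usually the more manageable choice for large files.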

Once the file is picked up, it is moved to the in-progress directory while it gets processed, regardless of the Data field setting. The in-progress directory acts like an archive folder in this way.

That’s it! File Channels are great when receiving a file from an outside organization that sends it on a schedule. That way, if the file happens to be delayed, the File Channel will still pick it up when it finally gets to Lawson.